In October 2025, Reflection AI raised $2B at an $8B valuation. It’s an objectively impressive round that signals continued investor conviction in the AI infrastructure layer. Congrats to Misha Laskin, Ioannis Antonoglou, and the entire team.

But despite the funding, three major challenges with open-weight models in the enterprise have yet to be solved:

Challenge #1: The Fine-Tuning Treadmill

Here's the typical enterprise buyer journey with open-weight models:

- Enterprise licenses open-weight model

- Spends 6 months and $500K fine-tuning for their specific use case

- Base model updates with performance improvements

- Fine-tune breaks or degrades

- Enterprise faces a choice: stay on old version or re-train

This seems manageable until you factor in the security dimension.

The enterprise security team discovers a CVE (an entry in the Common Vulnerabilities and Exposures list) affecting the old model version. Now there’s a compliance mandate to upgrade. The fine-tune rebuild that was optional becomes mandatory, and it now happens on a compressed timeline driven by security deadlines rather than business readiness.

The core problem is that open-weight providers are selling rapid iteration to buyers who optimize for the opposite.

Show me the Fortune 500 CIO who wants to be in the model maintenance business. That CIO doesn’t exist. CIOs want to buy outcomes, not science projects.

The entire pitch assumes customers want to own the AI stack. But what most enterprises actually want is to own their data, not a vendor's weights.

What This Means for Adoption

Without strong version pinning guarantees, backward-compatibility commitments, or portability of fine-tunes across model versions, enterprises face:

- Unpredictable re-training costs every time the base model ships an update

- Performance degradation risk when forced to upgrade

- Resource allocation uncertainty because the AI team never knows when the next forced rebuild will happen

This creates an adoption barrier that has nothing to do with model quality and everything to do with operational predictability.

One possible emerging solution is Mira Murati's Thinking Machines, which offers fine-tuning of open-weight models through an API.

Challenge #2: On-Prem Is Borrowed Time, Not a Moat

Every open-weight pitch I hear includes some variation of: “We deploy in your VPC. They don’t.”

But in reality, the enterprise anxiety this pitch relies on evaporates relatively quickly. Just look at what happened with SaaS in the previous decade.

How Enterprises Adopted SaaS

In 2010, banks refused to put anything in the cloud. “Our data needs to stay on-premises for security and compliance,” they said.

Then a few things happened:

- AWS achieved FedRAMP certification (2013)

- Salesforce achieved HIPAA compliance (2014)

- Major financial institutions started running Workday (2015)

- Those same paranoid banks moved half their stack to SaaS (2020)

Usually, the pattern goes something like this:

- New technology emerges

- Enterprises demand on-prem/private deployment

- SaaS providers check compliance boxes (FedRAMP High, HIPAA, SOC 2)

- Enterprise anxiety dissipates

- SaaS becomes the default

On-prem deployment is a 24-36 month wedge, not a lasting moat.

Unless Reflection AI and others can prove technical advantages that fundamentally cannot be delivered via API, they are selling anxiety relief with an expiration date.

What Happens When OpenAI Gets FedRAMP High?

Billions of dollars are being deployed behind a value proposition that evaporates the moment closed-source model providers achieve the same compliance certifications.

The question isn’t whether on-prem deployment matters today. It does. The question is whether it matters when:

- Anthropic has FedRAMP High

- OpenAI has HIPAA compliance

- Google Cloud AI has SOC 2 Type II

At that point, what’s the differentiation? If it’s purely “you can see the weights,” you’re betting that enterprises value transparency over everything else. History suggests they don’t. They value outcomes.

Challenge #3: Vertical AI is the Real Competition

While foundation model labs are raising billions to build horizontal models, something else is happening in the market.

Domain-specific AI companies are quietly eating enterprise budgets.

The Vertical AI Landscape

Legal & Finance

Harvey: Training on actual legal briefs, motions, and case law

Xyla: Training on financial documents and regulatory filings

Defense & National Security

Palantir: Building AI on defense workflows and intelligence data

Shield AI: Training on tactical scenarios and mission planning

Anduril: Developing AI for autonomous defense systems

Healthcare

Hippocratic AI: Training on clinical workflows and patient protocols

Abridge: Training on medical conversations and documentation

What makes them different?

These companies compete on domain understanding rather than model quality.

A horizontal foundation model (open or closed) walks into healthcare and immediately hits a wall:

- It doesn’t understand clinical workflows

- It doesn’t know HIPAA-compliant documentation patterns

- It hasn’t been trained on actual patient-provider interactions

- It can’t navigate the regulatory complexity of healthcare billing

Meanwhile, Hippocratic AI has been ingesting HIPAA-compliant patient data for three years. It’s not even close.

Domain Expertise Compounds, Model Capabilities Don’t

The value in AI is the context the model has absorbed.

A general-purpose model can be technically superior and still lose to a domain-specific model that:

- Understands industry jargon and abbreviations

- Knows the regulatory frameworks

- Has trained on actual workflows from that industry

- Can navigate the political and organizational dynamics

This is why I think horizontal open-weight models face an uphill battle in enterprise.

By the time an enterprise fine-tunes a horizontal model enough to match the domain understanding of vertical AI, it has spent months and hundreds of thousands of dollars. And the vertical AI company has just shipped another update trained on fresh domain data.

What Problem Are We Actually Solving?

The open-weight narrative is intellectually satisfying but commercially confused.

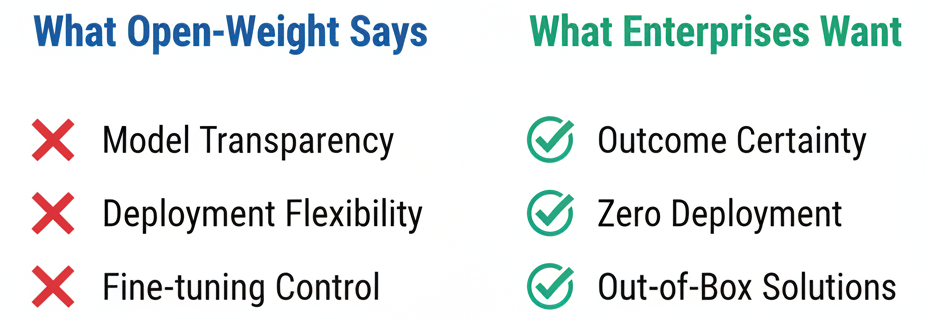

What Open-Weight Advocates Claim Enterprises Want:

- Model transparency - “We need to see the weights to ensure safety”

- Deployment flexibility - “We need to run it in our VPC”

- Fine-tuning control - “We need to customize it for our use case”

What Enterprises Actually Want:

- Outcome certainty - “Will this solve our problem?”

- Zero deployment burden - “Can we just API call it?”

- Solutions that work out-of-box - “Do we have to become AI experts to use this?”

There’s a massive gap between these two lists.

Open-weight models solve problems enterprises don’t have while missing the ones they do.

When Do Open-Weight Models Make Sense?

There are scenarios where open-weight models make economic sense for enterprises:

Scenario 1: Extremely High Volume Use Cases

If you’re processing billions of API calls per month, the economics of running your own infrastructure vs. paying per-token to a SaaS provider can flip.

Example: A large e-commerce company doing real-time product recommendations might save millions annually by running its own model.

But this is a tiny percentage of enterprise buyers.
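The claim that the economics "can flip" at high volume can be made concrete with a simple break-even calculation. The minimal sketch below makes two assumptions:

- API spend is purely per-token.
- Self-hosting is mostly a fixed monthly cost (reserved GPUs, an ops team, amortized fine-tune rebuilds) plus a small marginal cost per token.

The `CostModel` class and every number used with it are hypothetical, chosen for illustration rather than taken from any real price sheet.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    """Hypothetical monthly cost model; all inputs are illustrative assumptions."""
    api_price_per_m: float      # API price, $ per million tokens (assumed)
    self_fixed_monthly: float   # self-hosting fixed cost per month, $ (GPUs, ops staff, fine-tune upkeep; assumed)
    self_marginal_per_m: float  # self-hosting marginal cost, $ per million tokens (assumed)

    def api_cost(self, m_tokens: float) -> float:
        """Monthly API spend for a volume given in millions of tokens."""
        return self.api_price_per_m * m_tokens

    def self_host_cost(self, m_tokens: float) -> float:
        """Monthly self-hosting spend: fixed overhead plus per-token marginal cost."""
        return self.self_fixed_monthly + self.self_marginal_per_m * m_tokens

    def break_even_m_tokens(self) -> float:
        """Volume (millions of tokens/month) where both options cost the same.

        Solves api_price * V = fixed + marginal * V for V. If self-hosting is not
        cheaper per token, it never catches up, so the break-even is infinite.
        """
        margin = self.api_price_per_m - self.self_marginal_per_m
        if margin <= 0:
            return float("inf")
        return self.self_fixed_monthly / margin


if __name__ == "__main__":
    # Illustrative inputs only: $2 per million tokens via API, $100K/month fixed
    # self-hosting cost, $0.50 per million tokens marginal self-hosting cost.
    model = CostModel(api_price_per_m=2.0, self_fixed_monthly=100_000.0, self_marginal_per_m=0.5)
    print(f"Break-even: {model.break_even_m_tokens():,.0f} million tokens/month")
```

With these made-up inputs, self-hosting only comes out ahead above roughly 67 billion tokens per month. The specific threshold matters less than the shape: a large fixed cost means most enterprises never reach the volume where owning the infrastructure pays off.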

Scenario 2: Highly Regulated Industries with Data Residency Requirements

Some industries (defense, intelligence, certain healthcare applications) have legal requirements that data cannot leave certain jurisdictions or networks.

For these buyers, on-prem is a mandate, at least until their compliance requirements change to allow closed models.

But over time, the share of buyers bound by these mandates will likely shrink as trust in closed SaaS models grows.

Scenario 3: Research Institutions and Model Developers

Universities, research labs, and companies building AI products on top of foundation models genuinely benefit from open weights because of their customizability and the opportunity to achieve explainability.

But these are not traditional enterprise buyers. They are developers, not end users.

How Open-Weight Models May Play Out

My guess is that the next few years will follow a predictable pattern.

Pilots & Early Success: In the near term, we'll see a honeymoon period where open-weight models continue attracting capital at high valuations. Enterprises will run pilots and proof-of-concepts, early adopters with sophisticated AI teams will achieve legitimate success, and the prevailing narrative will remain "open weights are the future of enterprise AI."

Reality Sets In: Then, reality will set in. Enterprises that adopted open-weight models will face the burden of maintaining their fine-tunes through breaking changes, security mandates, and version upgrades. Meanwhile, SaaS AI providers will have achieved FedRAMP and HIPAA compliance, eliminating the compliance advantage that drove on-prem deployments. Vertical AI companies will close major enterprise contracts by offering domain-specific solutions that just work. Board-level conversations will shift from "Do we need open weights?" to "Why are we maintaining this infrastructure?"

The Market Reshuffle: The market will then settle into one of three outcomes.

(1) Open-weight providers might pivot to become infrastructure and tooling companies, the "AWS of AI", providing the platform layer while others build applications on top.

(2) We could see vertical consolidation where open-weight companies acquire or partner with vertical AI firms to offer domain-specific solutions.

(3) We might see open-weight models become commoditized infrastructure that powers applications, but all the economic value accrues to the application layer rather than the model layer.

My Open Questions

If you work at or invest in open-weight AI companies, I’d love to hear your perspective on:

- How do you solve the fine-tuning maintenance problem at scale?

- What’s your response when SaaS providers achieve the same compliance certifications?

- How do you compete with vertical AI that has years of domain-specific training data?

- What percentage of Fortune 500 companies actually want to be in the model operations business?

- Is there a path where open weights win that I’m not seeing?

Why the Open-Weight Model Question Matters

The enterprise buyers I talk to don’t care whether the model is open or closed. They care whether it solves their problem without them having to become AI experts.

And right now, the companies best positioned to do that are not the ones with the best foundation models but the ones with the deepest domain expertise.

I’m genuinely looking for perspectives that challenge this analysis. Have thoughts on the open-weight enterprise thesis? Let me know.